The New Yandex.Webmaster: What Features Are Now Available?

The updated version of Yandex.Webmaster now includes a convenient tool for evaluating how the search engine indexes your site. You will find it in the Indexing section, on the Statistics tab. The workflow is simple and is described step by step below.

Getting Started: Site Structure

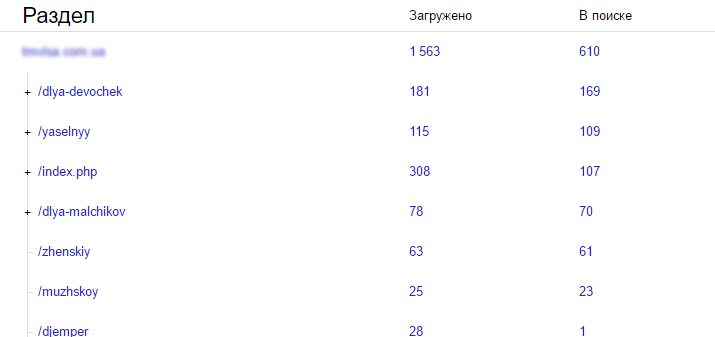

If your site has fewer than 5,000 pages, you can start downloading the statistics right away. If you work with a large site, organize the data first by mapping out the site's structure. An ordered structure gives you a more complete picture of the project and helps you find its "weak points".

The result of this work is a table that lists the site's sections and the URLs that belong to each of them. Next, check whether the real structure matches the one you mapped out. The structure shown in the Indexing section lists the pages loaded by the search robot, and only large sections from the search database are displayed there. At present, Yandex lets you add up to 5 new sections to the structure, and technical support promises to raise this limit if enough webmasters request it. You can add and remove sections as needed.
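A minimal sketch of how such a section table could be built in Python. It assumes you already have a flat list of URLs (for example, from a sitemap) and that the first directory in the path identifies the section. The function name `group_by_section` and the example URLs are hypothetical, not part of Yandex.Webmaster.

```python
from collections import defaultdict
from urllib.parse import urlparse


def group_by_section(urls):
    """Group URLs by their first path directory, treated here as the 'section'.

    Assumption: a URL such as /catalog/shoes/1 belongs to the section /catalog/.
    Pages that sit directly in the root (/, /about.html) go under "/".
    """
    sections = defaultdict(list)
    for url in urls:
        path = urlparse(url).path
        parts = [p for p in path.split("/") if p]
        # The first segment counts as a directory only if something follows it
        # or the path ends with a slash.
        if parts and (len(parts) > 1 or path.endswith("/")):
            section = "/" + parts[0] + "/"
        else:
            section = "/"
        sections[section].append(url)
    return dict(sections)


# Made-up example URLs, for illustration only.
example_urls = [
    "https://example.com/",
    "https://example.com/catalog/",
    "https://example.com/catalog/shoes/1",
    "https://example.com/blog/post-1",
    "https://example.com/about.html",
]

for section, pages in sorted(group_by_section(example_urls).items()):
    print(section, len(pages))
```

The resulting sections can then be compared with the structure shown in the Indexing section to spot sections that are missing or unexpectedly large.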

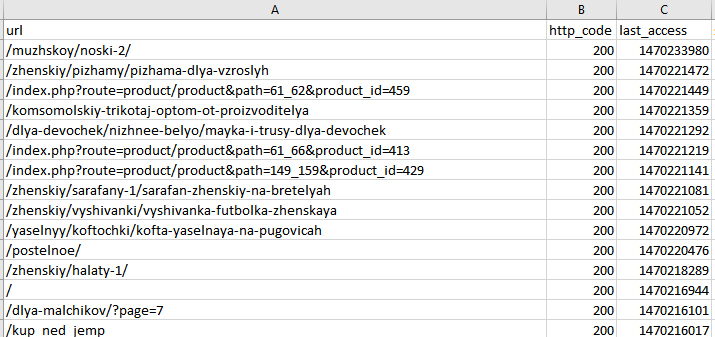

Downloading statistics and analyzing the data

To export the data, go to Indexing - Statistics. Select the whole domain if the site is small, or a specific section if the site is large. To get the export, click the Download archive button. You will receive *.tsv files, which you can open in Excel. Then filter the data and start analyzing it. Use this algorithm:

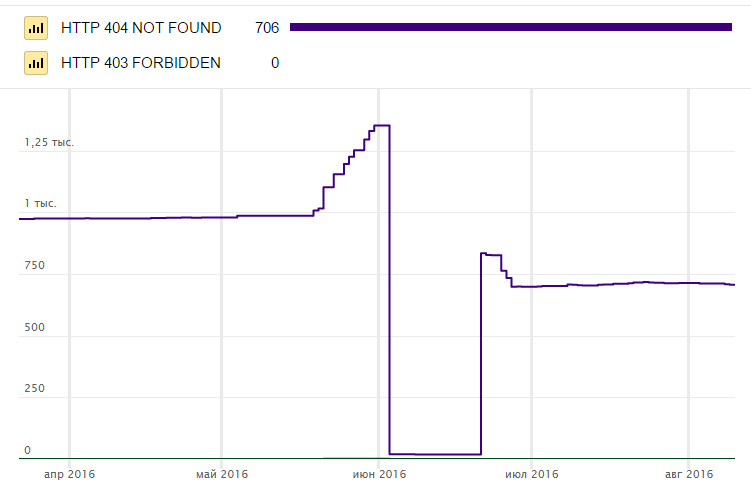

- Select pages with a code other than 200. The codes are in the http_code column. Their meaning is simple: 3xx (for example, 301 or 302) is a server redirect from one page to another, 4xx (for example, 404) is a non-existent page, 5xx (for example, 500) is a server error, and "-" means the page was not indexed by the search robot.

- Find pages that return code 200 but are not in the search. To do this, filter http_code to 200 and searchable to 0. Review these pages one by one to understand why Yandex considers them useless.

- Find pages with code 200 that are in the search but are in fact "garbage": technical duplicates, pages left over from the previous structure, internal search and filter pages, and other off-format content. If you find such pages, decide whether to block them from the search or delete them altogether.
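The three filtering steps above can also be done in a script instead of Excel. The following is a minimal sketch using only the Python standard library. The column names `http_code` and `searchable` come from the export described above; the `url` column name, the use of `"1"` for pages that are in the search, and the sample rows are assumptions made for illustration.

```python
import csv
import io


def load_rows(tsv_file):
    """Read a tab-separated export into a list of dicts keyed by column name."""
    return list(csv.DictReader(tsv_file, delimiter="\t"))


def split_by_step(rows):
    """Split rows into the three groups used in the analysis.

    Returns (non_200, ok_not_in_search, ok_in_search).
    """
    non_200 = [r for r in rows if r["http_code"] != "200"]
    ok_not_in_search = [
        r for r in rows if r["http_code"] == "200" and r["searchable"] == "0"
    ]
    ok_in_search = [
        r for r in rows if r["http_code"] == "200" and r["searchable"] == "1"
    ]
    return non_200, ok_not_in_search, ok_in_search


# Made-up sample in the assumed layout; a real export may have other columns.
SAMPLE = (
    "url\thttp_code\tsearchable\n"
    "/catalog/\t200\t1\n"
    "/catalog/?sort=price\t200\t1\n"
    "/old-page/\t302\t0\n"
    "/thin-page/\t200\t0\n"
    "/never-crawled/\t-\t0\n"
)

rows = load_rows(io.StringIO(SAMPLE))
non_200, ok_not_in_search, ok_in_search = split_by_step(rows)

print("Not 200:", [r["url"] for r in non_200])
print("200 but not in search:", [r["url"] for r in ok_not_in_search])
print("200 and in search (review for garbage):", [r["url"] for r in ok_in_search])
```

For a real file, replace the `io.StringIO(SAMPLE)` argument with a file opened as `open(path, newline="", encoding="utf-8")`, where the encoding is also an assumption to verify against your export. The third group still needs a manual review, since a script cannot decide on its own which indexed pages are "garbage".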

Website analysis errors

The most common errors found during the analysis:

- A temporary 302 redirect: the original page is not removed from the search engine index and ends up duplicated. If you move a page to a new URL permanently, use a 301 redirect;

- 404: this code is acceptable only for pages that have been removed. If existing pages return it, investigate why;

- 500, server failure: monitor the stability of the server. If the site crashes often and uptime falls below 99.5%, it is worth replacing the server;

- 200 code for non-existent pages: make sure that all non-existent pages return only 404;

- Existing pages that return a code other than 200: only pages with code 200 are included in the search;

- Pages that have no internal incoming links. If not even another page of your own site links to a page, it will most likely not participate in the search. Add logical internal links where necessary.
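Two of the checks in the list above are easy to sketch in Python: finding pages without internal incoming links and comparing uptime against the 99.5% threshold. This is only an illustration. It assumes you have already collected a map of internal links (for example, with your own crawler) and a count of availability checks; the names `find_orphans` and `uptime_percent` and all sample data are hypothetical.

```python
def find_orphans(links, homepage="/"):
    """Return pages that no other page of the site links to.

    links: dict mapping each page URL to the list of internal URLs it links to.
    The homepage is excluded because it is the entry point of the site.
    """
    linked_to = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:  # a page linking to itself does not count
                linked_to.add(target)
    return sorted(p for p in links if p not in linked_to and p != homepage)


def uptime_percent(total_checks, failed_checks):
    """Share of successful availability checks, in percent."""
    return 100.0 * (total_checks - failed_checks) / total_checks


# Made-up link map, for illustration only.
site_links = {
    "/": ["/catalog/", "/blog/"],
    "/catalog/": ["/"],
    "/blog/": ["/blog/"],
    "/old-promo/": ["/"],
}

print("Pages without internal incoming links:", find_orphans(site_links))

# 8 failed checks out of 1000 is below the 99.5% threshold named above.
if uptime_percent(1000, 8) < 99.5:
    print("Uptime is below 99.5%")
```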

As a result of this analysis and error checking, you can find pages that take up traffic to no benefit, as well as useful pages that could bring in traffic but for some reason do not participate in the search.